Educational & Group project

September 2024 – Current

Overview

Unlike the other projects I have completed during my studies at BUas, this project spans over 6 months. Each group of students works closely with a client found by our university and takes on multiple roles: data scientist, data engineer and analytics translator. The group manages the workload, the scoping and the stakeholders while developing a solution that provides value for the client.

My team chose Specifix, a medical AI company that aims to create data- and AI-based tools to help surgeons perform fracture reductions faster and more reliably. Specifix focuses on fractures of the distal radius bone.

We decided to take on multiple parts of the pipeline at the same time, dividing our efforts between two sub-teams, aided by a Minor student with domain knowledge in medical imaging. My chosen role in this project is data scientist, working with my team to train different models to achieve our goal. Because we work with 3D data, I also found myself acting as a domain expert in 3D modeling and dynamics.

Dataset

Due to privacy concerns, we signed NDAs and agreed not to disclose or share anything specific regarding the data. The initial dataset consisted of multiple CT scans of the distal radius bone. For a smaller part of the dataset, we also had the contralateral bone as ground truth for the fracture reduction.

Approach

We split the team in two so that we could tackle different parts of the project in parallel. This lets us shift focus, achieve more in a shorter time, present to stakeholders, and use their feedback to work out which parts of the project stand to provide the most value.

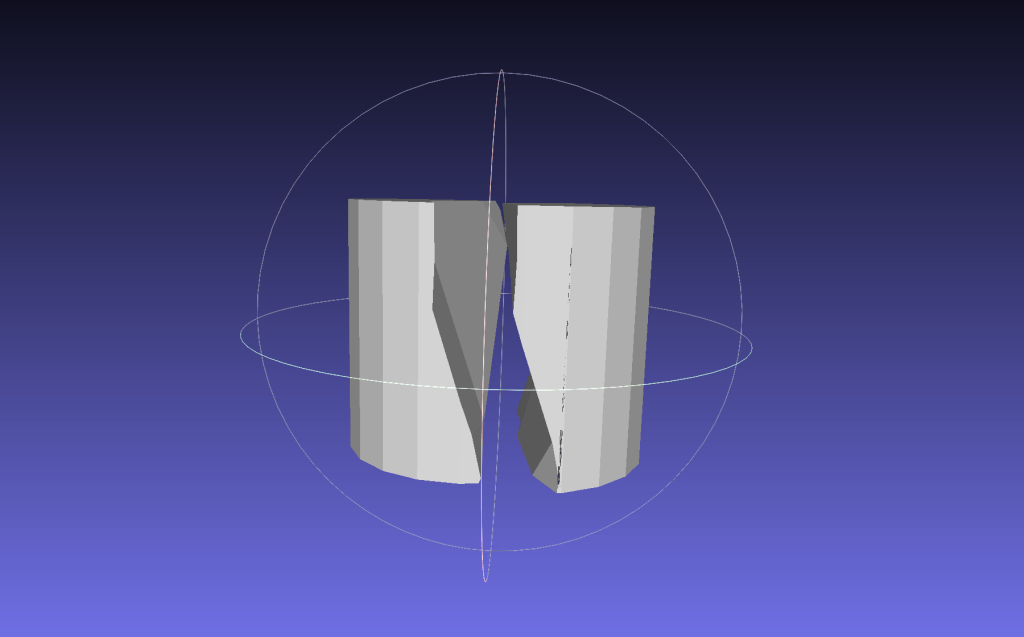

I was part of the sub-team working on a variational autoencoder. Its purpose is to create a shape model of the fractured bone, which can serve as ground truth for a reinforcement learning model that performs the fracture reduction in a virtual environment.

Both variational autoencoders and reinforcement learning were new to us at the start of the project, so we decided to run multiple small-scale experiments and work our way up to the actual task.

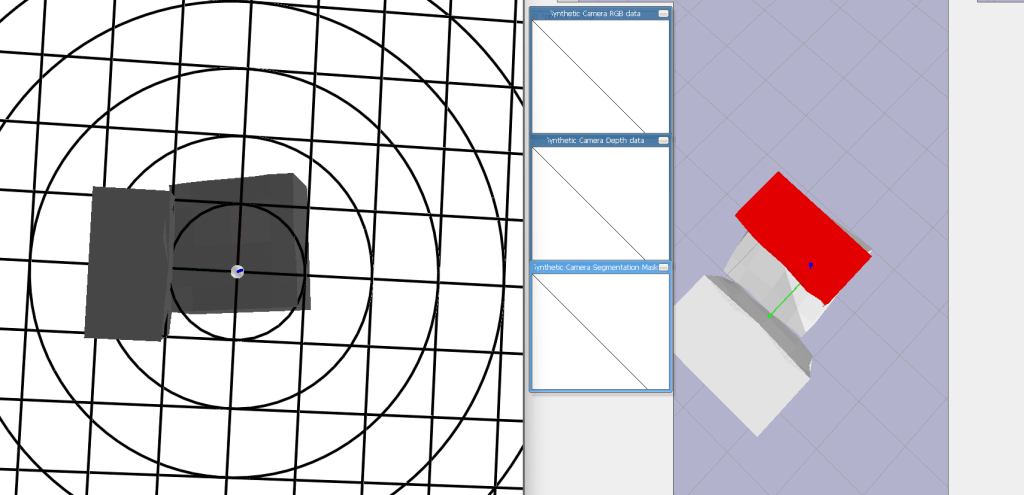

Initially, my main task was to programmatically create both 2D and 3D datasets of fractured shapes, using a randomly generated noise map to displace a plane that serves as the cutting shape. We started with primitive objects (e.g. cube, sphere, cylinder) of different sizes, with randomly rotated and displaced fractures. The end goal is to apply the same process to the contralateral bones, creating fractures in them so that synthetic data can support the real data.
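
To illustrate the idea without touching any project data, here is a minimal 2D sketch in Python with NumPy. It is not the project's actual generator: the function names (`make_disc`, `noise_profile`, `fracture`), the moving-average smoothing of the noise, and all parameter values are assumptions chosen for illustration. A disc stands in for the sphere primitive, and a straight cutting line (the 2D analogue of the plane) is randomly rotated, shifted and displaced by a smoothed noise profile before it splits the shape into two fragments.

```python
import numpy as np


def make_disc(size=64, radius=24):
    """Binary mask of a filled disc, the 2D stand-in for a sphere primitive."""
    yy, xx = np.mgrid[0:size, 0:size]
    c = (size - 1) / 2
    return (xx - c) ** 2 + (yy - c) ** 2 <= radius ** 2


def noise_profile(n, amplitude, smooth, rng):
    """1D noise of length n, smoothed with a moving average (an assumption)."""
    raw = rng.standard_normal(n + smooth - 1)
    kernel = np.ones(smooth) / smooth
    prof = np.convolve(raw, kernel, mode="valid")  # length is exactly n
    peak = np.abs(prof).max()
    return amplitude * prof / peak if peak > 0 else prof


def fracture(mask, angle, offset, amplitude=3.0, smooth=9, seed=0):
    """Split a square binary mask along a noise-displaced cutting line."""
    rng = np.random.default_rng(seed)
    size = mask.shape[0]
    yy, xx = np.mgrid[0:size, 0:size]
    c = (size - 1) / 2
    # t runs along the cutting line, d measures the signed distance across it.
    t = (xx - c) * np.cos(angle) + (yy - c) * np.sin(angle)
    d = -(xx - c) * np.sin(angle) + (yy - c) * np.cos(angle)
    # Look up the displacement of the line at each pixel's position along it.
    n = 2 * size
    prof = noise_profile(n, amplitude, smooth, rng)
    idx = np.clip(np.round(t + size).astype(int), 0, n - 1)
    side = d > offset + prof[idx]
    return mask & side, mask & ~side


# Usage: one randomly rotated and displaced fracture of a disc.
rng = np.random.default_rng(42)
disc = make_disc()
frag_a, frag_b = fracture(
    disc,
    angle=rng.uniform(0, np.pi),
    offset=rng.uniform(-8, 8),
    seed=1,
)
```

The same construction should carry over to 3D by replacing the 1D noise profile with a 2D noise map over the cutting plane and the pixel grid with a voxel grid or mesh, although a mesh-based version would need a proper boolean cut rather than a simple mask comparison.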

After creating the dataset generator, I moved on to supporting my teammate, both in researching the variational autoencoder and developing its architecture, and in creating the reinforcement learning environment and defining the reward function.

Results

*Due to the NDA I am limited in what I can show*